SOAPSonar – Testing SOAP, REST or JSON Services

“What is the difference between testing a SOAP service and testing a JSON/REST or other service using SOAPSonar?” After trying to answer this question verbally three times in the last week, I thought it a good idea to show it in a post.

- SOAP – “Simple Object Access Protocol” uses XML messages and is usually described by a WSDL. It also has an explicit error format, the SOAP Fault message. It tends to be heavier weight, and services are often far larger.

- REST – Representational State Transfer is a software architectural style consisting of a coordinated set of architectural constraints applied to components, connectors, and data elements within a distributed hypermedia system. JSON is one of the data formats commonly used with REST services.

- JSON – JavaScript Object Notation uses human-readable text (not XML) to transmit data objects consisting of attribute–value pairs. JSON services do not use WSDL (the similar WADL is unpopular, still in draft and seldom used), but usually rely on a service description document. JSON Schema is also seldom used at present. JSON has no explicit error format. This makes JSON lightweight and ideal for mobile applications.

So what does that really mean for someone using SOAPSonar?

The Difference

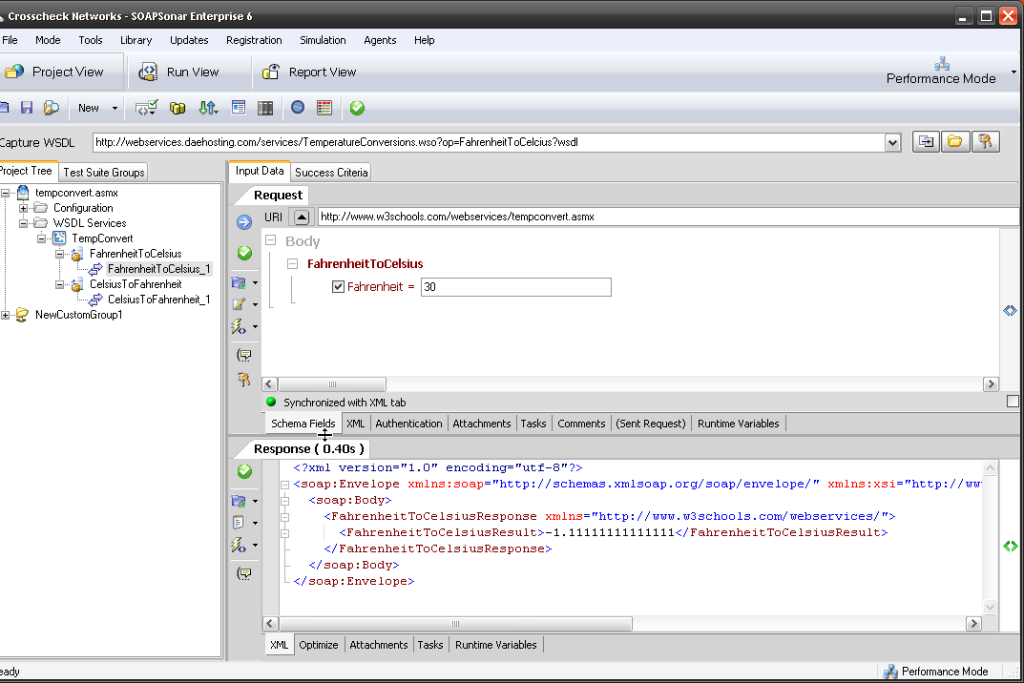

With a SOAP Service

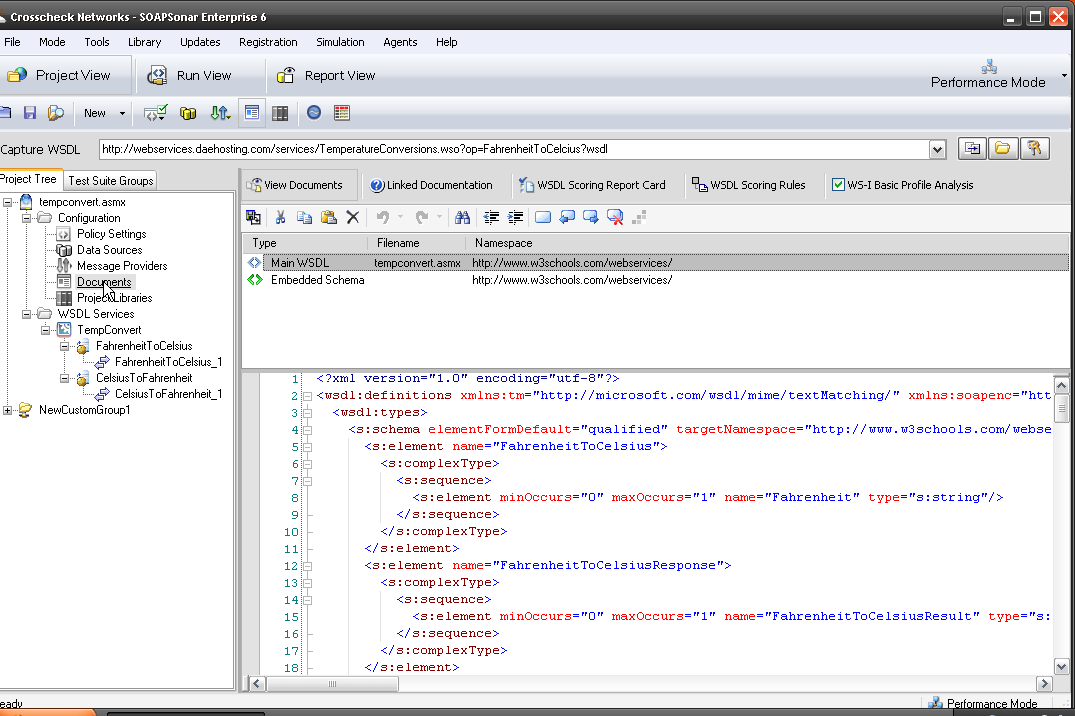

You can use the Capture WSDL bar, enter the URI with ?wsdl appended, and discover all the available services. Try it now with

http://www.w3schools.com/webservices/tempconvert.asmx?wsdl

Notice that TempConvert and its two services are automatically populated. When you select FahrenheitToCelsius_1, notice that SOAPSonar populates the Body with an input field. If you enter a value, commit and send, you get your XML response.

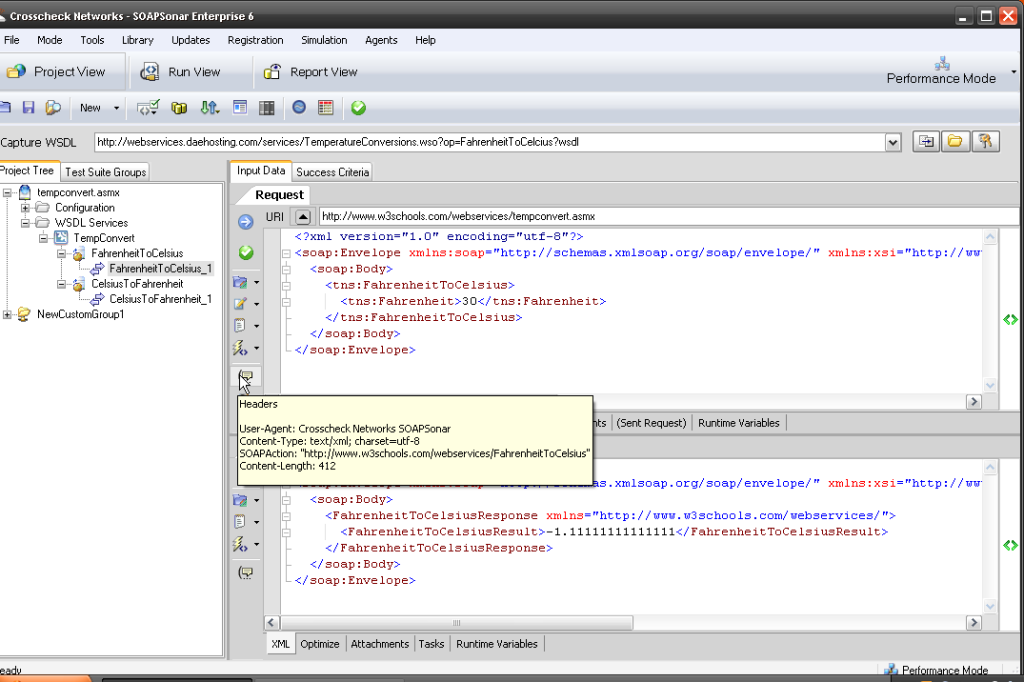

SOAPSonar offers a way to view the XML request and the request headers, using the tab labelled XML. The same is possible with JSON, although the headers tend to be lighter weight.
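For readers who want a feel for what sits behind the XML tab, here is a rough Python sketch that builds a SOAP 1.1 envelope for a FahrenheitToCelsius call. The service namespace and the `Fahrenheit` element name are assumptions made for illustration; the real values come from the WSDL that SOAPSonar captured.

```python
import xml.etree.ElementTree as ET

# Standard SOAP 1.1 envelope namespace.
SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
# Assumption: placeholder namespace. Copy the targetNamespace from the WSDL.
SERVICE_NS = "http://tempuri.org/"

ET.register_namespace("soap", SOAP_NS)


def build_envelope(fahrenheit: str) -> str:
    """Return a SOAP envelope (as text) asking to convert `fahrenheit`."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    # Assumption: operation and parameter element names, taken from the
    # test name shown in SOAPSonar rather than from the WSDL itself.
    operation = ET.SubElement(body, f"{{{SERVICE_NS}}}FahrenheitToCelsius")
    ET.SubElement(operation, f"{{{SERVICE_NS}}}Fahrenheit").text = fahrenheit
    return ET.tostring(envelope, encoding="unicode")


if __name__ == "__main__":
    print(build_envelope("80"))
```

Compared with the single-line JSON request later in this post, even this small call carries an envelope, a body and namespaced elements, which is what "heavier weight" means in practice.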

You can also go to Documents and View Schema; a schema most likely does not exist for a JSON service.

With a JSON Service

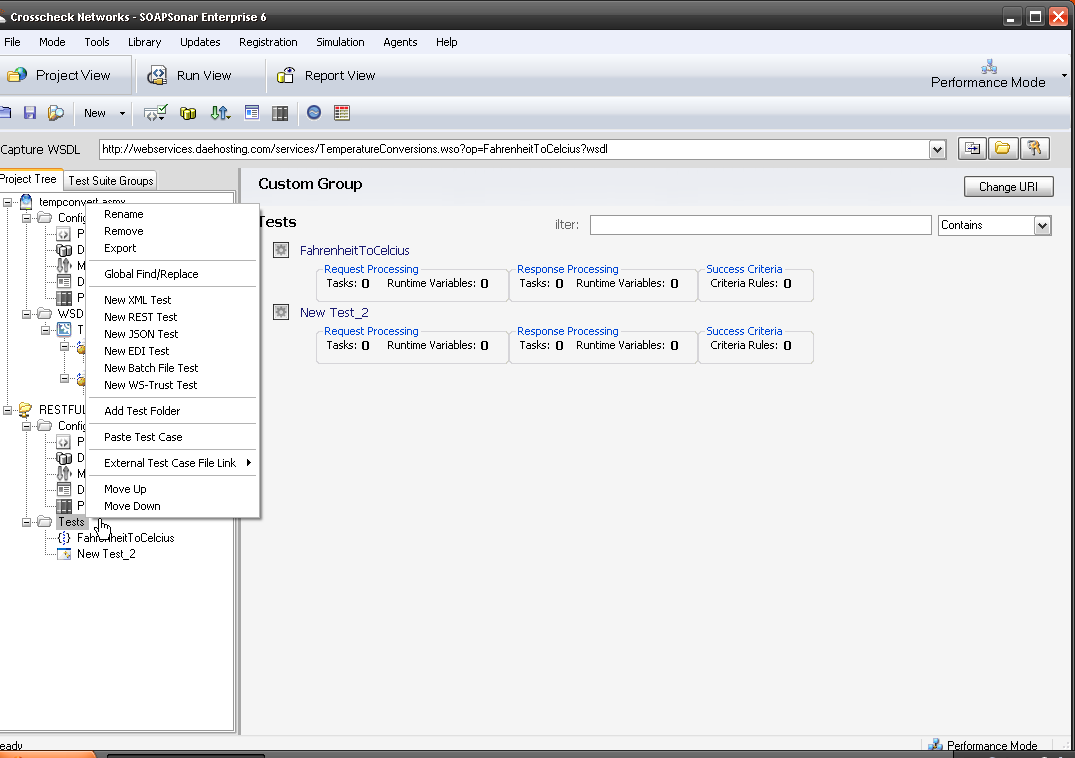

There is no WSDL that can be captured, and the chances are there is no schema. This means a tester cannot automatically discover services in the same way. In SOAPSonar, we start by selecting File, New Test Group, and then name the test group. We can then add a new test by right-clicking and choosing New JSON Test, or the more generic New REST Test, and naming each one.

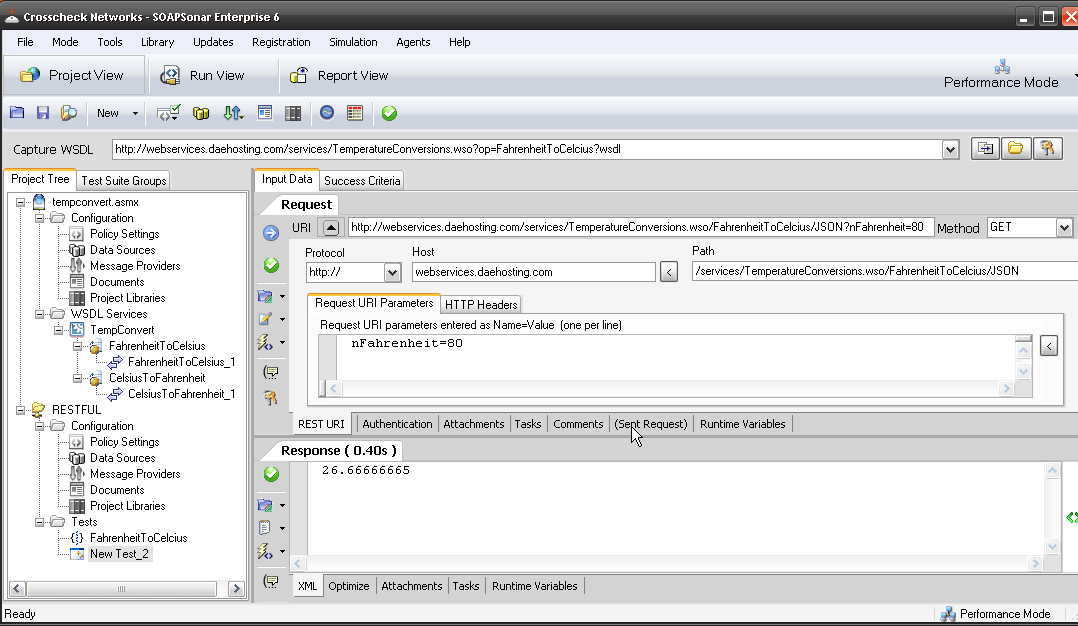

We then need a URI, the query parameters and the method. Let's use

http://webservices.daehosting.com/services/TemperatureConversions.wso/FahrenheitToCelcius/JSON

as the URI, ?nFahrenheit=decimal as the parameter to send, and GET as the method. To send 80 as the value, we replace decimal with 80. How do I know this? I read the service's documented definition and example. The REST view in SOAPSonar would be as below. Notice that the body of the request is frequently empty.

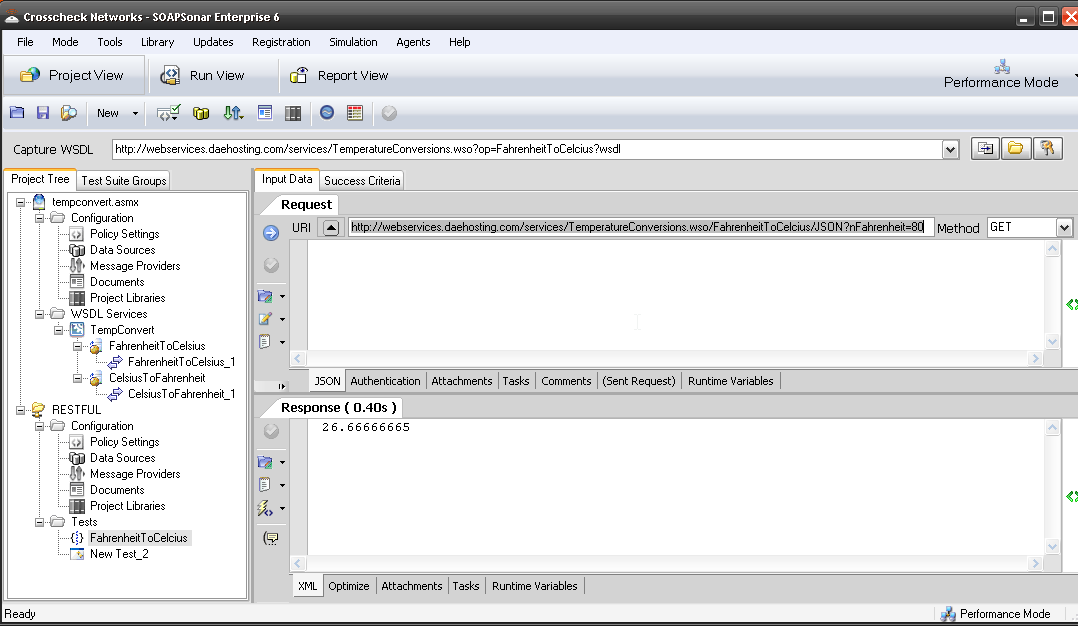

The JSON view is a single query line in the URI, plus the method. There is no WSDL to view, and although incorrect queries will return an error, the description is limited.
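To make the "single query line" concrete, here is a small Python sketch that assembles the same GET request outside SOAPSonar. The URI and the `nFahrenheit` parameter come from the example above; the helper name `build_request_uri` is hypothetical, and the request is only built here, not sent.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

BASE_URI = (
    "http://webservices.daehosting.com/services/"
    "TemperatureConversions.wso/FahrenheitToCelcius/JSON"
)


def build_request_uri(fahrenheit) -> str:
    """Append the nFahrenheit query parameter to the service URI."""
    query = urlencode({"nFahrenheit": fahrenheit})
    return f"{BASE_URI}?{query}"


if __name__ == "__main__":
    uri = build_request_uri(80)
    print("GET", uri)
    # The request has no body: everything the service needs is in the URI.
    print(parse_qs(urlsplit(uri).query))
```

Passing the resulting URI to something like `urllib.request.urlopen` would issue the GET; that step is left out so the sketch does not depend on the service being reachable.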

So how then do testers know what to test? It's usually one of four ways:

- The tester reviews the JSON code, looks for all URIs, methods and attribute–value pairs, and reverse engineers test cases. This takes significant JSON knowledge.

- The tester relies on the service description document, which should define all attribute–value pairs, methods, URIs and query strings. This requires good documentation.

- The developer and tester (Agile facilitates this) create and define the unit tests together. Each unit test is then used by both developer and tester to validate the basic functionality of each function. The tester then adds automation data sources (ADS), chains functions, and tests negative scenarios, load, and all additional aspects of the function to get the desired coverage.

- You embrace the not-yet-standardized JSON Schema (tough given its level of maturity).

With JSON services, defining success criteria is also extremely valuable, due to the lack of an explicit error format. It's also far easier for a developer to make minor changes to code, as they don't need to update a schema, which makes regression testing important.
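As an illustration of what a success criterion can look like when there is no fault format to lean on, here is a minimal Python sketch. It assumes the temperature service answers with a bare JSON number, which is an assumption about the response shape rather than something confirmed above; the function name and the tolerance are likewise hypothetical.

```python
import json
import math


def meets_success_criteria(status_code, body_text, expected_celsius, tolerance=0.01):
    """Return True only if the response looks like a correct conversion."""
    # Criterion 1: the HTTP status must signal success.
    if status_code != 200:
        return False
    # Criterion 2: the body must be valid JSON.
    try:
        value = json.loads(body_text)
    except json.JSONDecodeError:
        return False
    # Criterion 3 (assumed response shape): the payload is a plain number.
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return False
    # Criterion 4: the number matches the expected result within tolerance.
    return math.isclose(value, expected_celsius, abs_tol=tolerance)


if __name__ == "__main__":
    expected = (80 - 32) * 5 / 9
    print(meets_success_criteria(200, "26.67", expected))
    print(meets_success_criteria(200, '{"error": "bad input"}', expected))
```

Because the service will not announce a failure in a standard way, each criterion has to be spelled out; the same checks can then be rerun as a regression test after every code change.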

The Same

So now that we have covered the differences, the rest is much the same. Lighter-weight JSON services tend to be much smaller, with a structure that is very easy to understand. Be it SOAP or REST, SOAPSonar (and CloudPort) will identify all the variables and display them in the same manner.

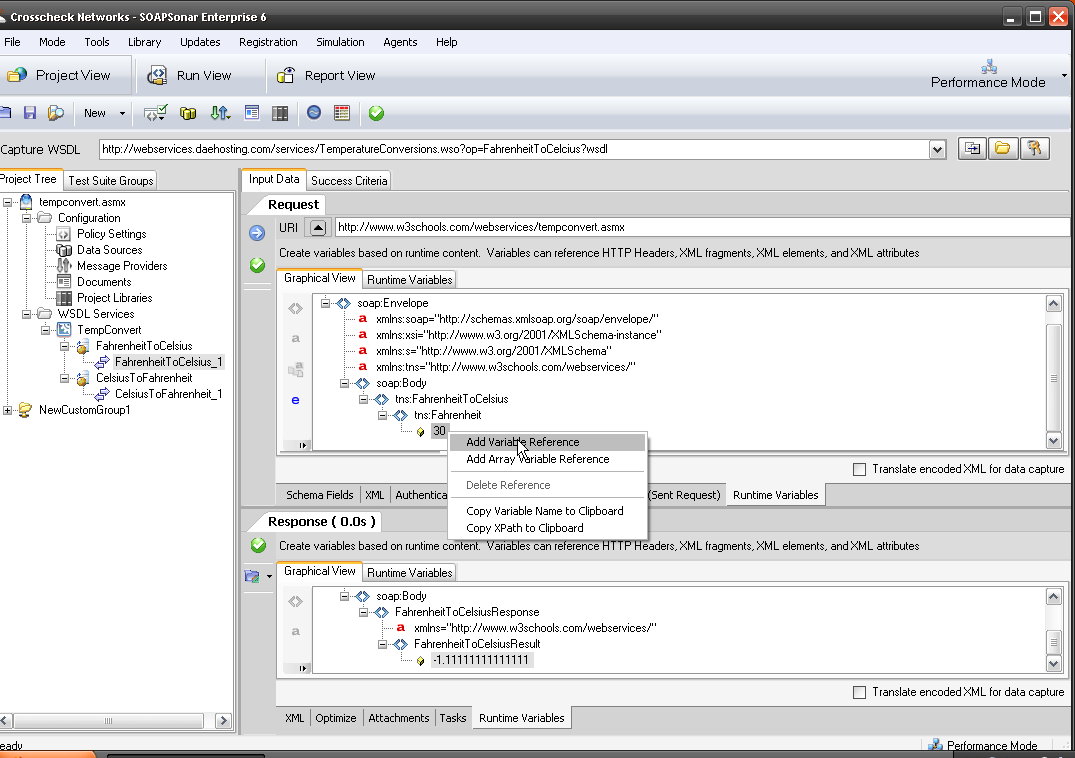

Here is what that SOAP service looks like in graphical view for both request and response in the Runtime Variables tab. Any of these variables can now be used for chaining, automation data sources, success criteria, regression and a variety of testing options, using the right-click option.

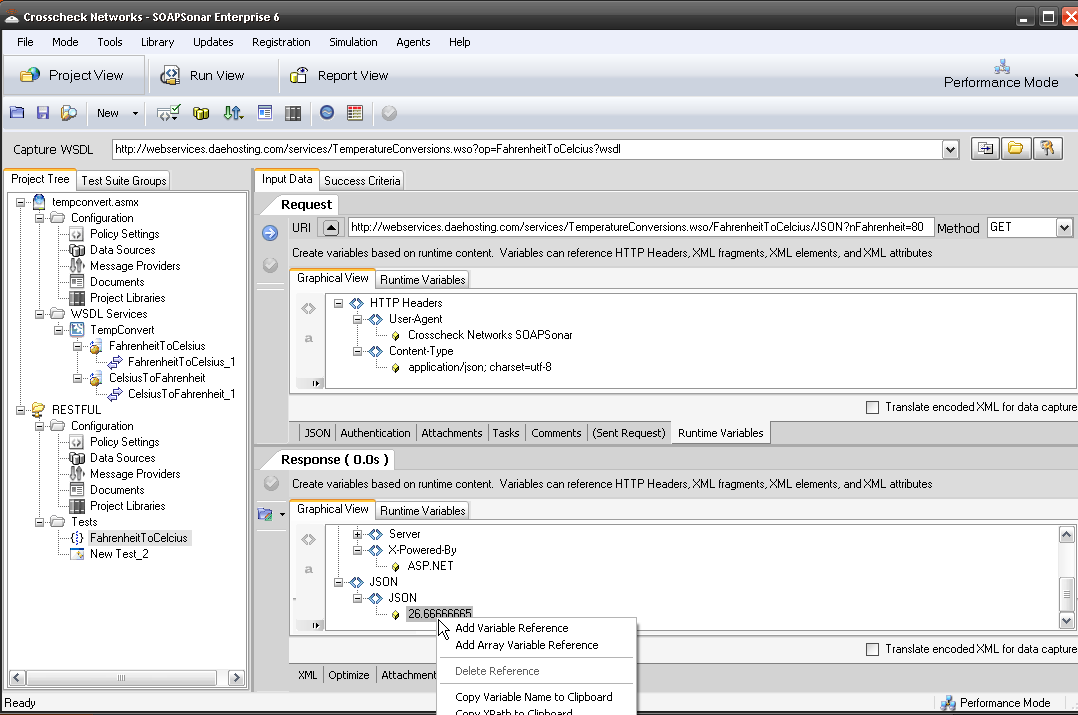

Here is what the JSON service looks like in graphical view for both request and response in the Runtime Variables tab. Any of these variables can also be used in the same way as with SOAP, for chaining, automation data sources, success criteria, regression and a variety of testing options, using the right-click option.

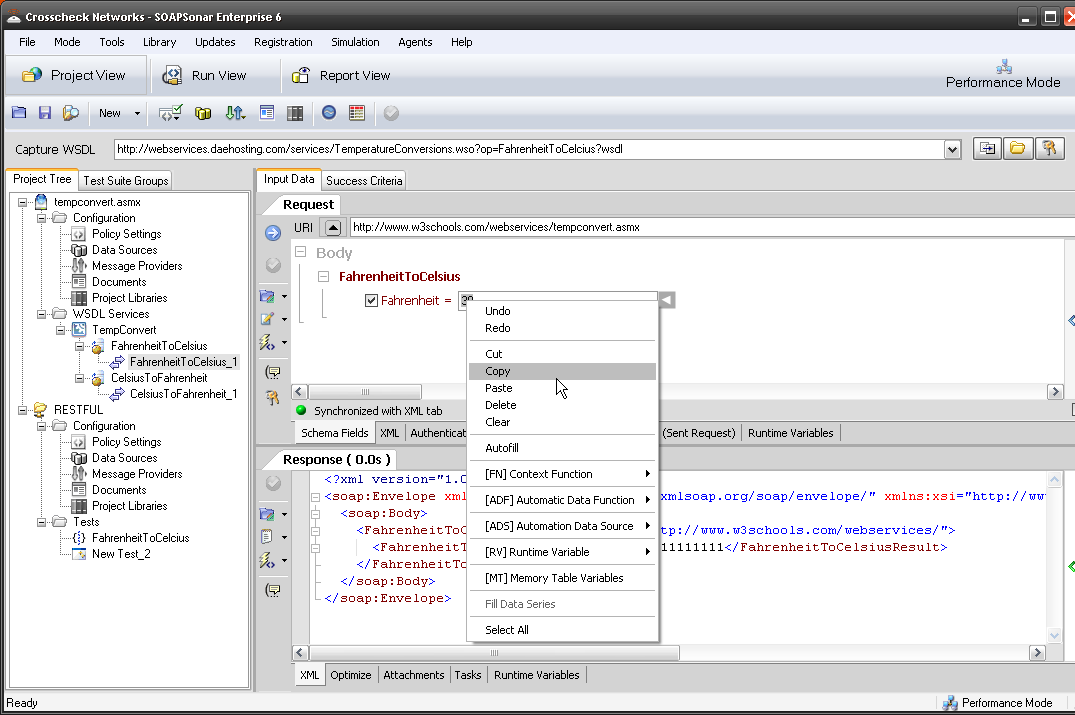

If you wish to use a variable with SOAP, you right-click and add it in the field.

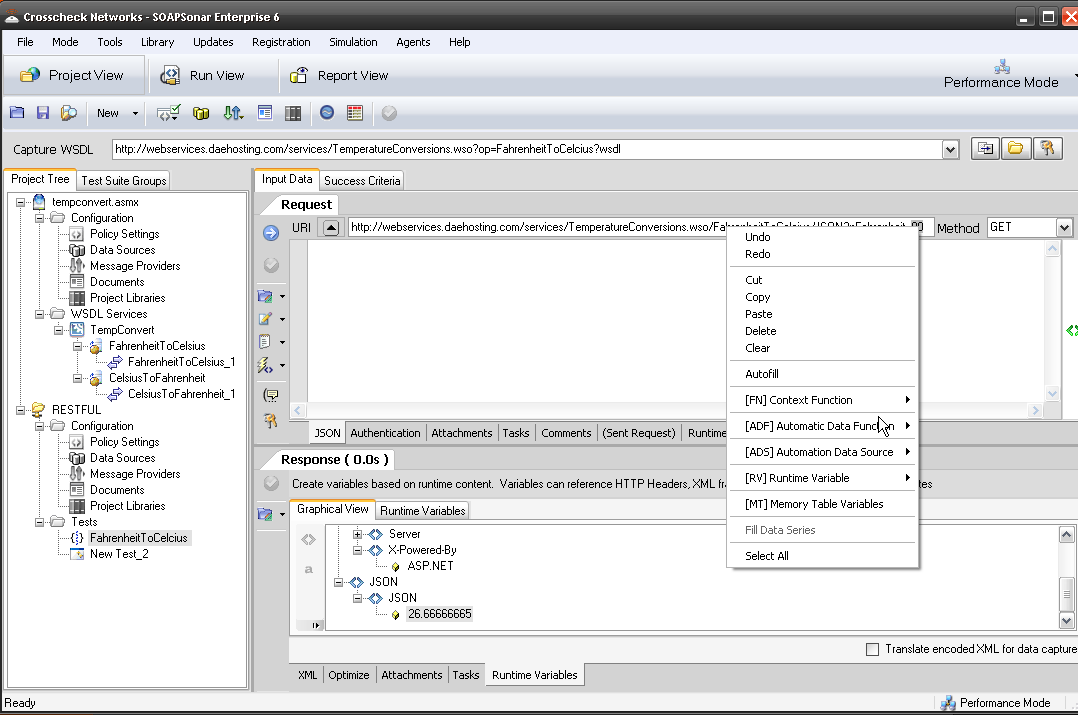

If you wish to use a variable with JSON, you right-click and add it in the URI (or occasionally the body) in the same way.
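Conceptually, placing a variable in the URI is just substitution before the request goes out. The Python sketch below shows the idea by feeding a previous response value back into the same temperature URI. The `{previous_result}` placeholder and the `chain_uri` helper are hypothetical and are not SOAPSonar's own variable syntax.

```python
from urllib.parse import quote

# Hypothetical template: the placeholder marks where a runtime variable goes.
URI_TEMPLATE = (
    "http://webservices.daehosting.com/services/"
    "TemperatureConversions.wso/FahrenheitToCelcius/JSON"
    "?nFahrenheit={previous_result}"
)


def chain_uri(template: str, runtime_variables: dict) -> str:
    """Fill URI placeholders with URL-encoded runtime variable values."""
    encoded = {
        name: quote(str(value), safe="")
        for name, value in runtime_variables.items()
    }
    return template.format(**encoded)


if __name__ == "__main__":
    # Value captured from an earlier response, reused as the next input.
    print(chain_uri(URI_TEMPLATE, {"previous_result": 26.67}))
```

Encoding the value before substitution keeps the chained request valid even when an earlier response contains spaces or other reserved characters.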

Conclusion

Yes, there are differences between testing SOAP and testing REST when using SOAPSonar. The lightweight nature of JSON, which makes it attractive, requires closer ties to development and more rigorous documentation to ensure that the service is being “discovered” and tested. This means testing and development need a clearly defined process, a clear demarcation of responsibilities, agreed deliverables, and co-operation between developers and testers.

I hope this helps those QA professionals who are now testing JSON as well as SOAP services to adapt to the changes more quickly. Questions? Comments?